Build a budget Deep Learning workstation Part Zero: random tidbits

Backstory

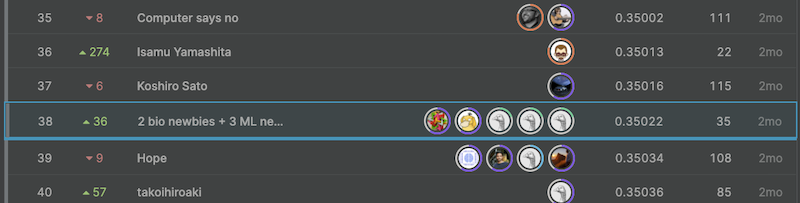

Back in early 2019, I returned to machine learning after a 5-year hiatus. The reason was that I had to teach an undergrad class introducing basic Python programming for data science: Math 10 at UC Irvine [1]. Before that, the last time I had coded in Python was for the deep learning class on Coursera back in 2014. I felt that the community had evolved to a remarkable new height, which helped me pick Python back up quickly. After hosting several in-class Kaggle competitions for Math 10 [2][3], I decided to try some of Kaggle’s featured competitions myself and get my hands on some real challenges against the world’s best data scientists. I participated in 5 competitions in total, 4 of which I actually dug into and spent time thinking about the solutions. Results? 2 silver medals and 2 bronzes. For a beginner like myself, that is not bad, I guess.

Above is the boring part of the backstory, and every story begins with a “however”. While I enjoyed freeloading on the Tesla P100 GPU on Kaggle’s cloud to train some of the most intricate and complicated networks such as transformers, I got frustrated when Kaggle started limiting users’ concurrent GPU sessions as well as total GPU time in the summer of 2019.

The last straw actually came as a pair.

One was a competition I entered recently on predicting mRNA stability for COVID-19 vaccine development, hosted by Stanford [4]. We got a silver eventually, yet I felt that the cycles of testing ideas and trimming models got frustratingly slow due to the GPU time limit Kaggle imposed. During this competition, I had a growing urge to build a deep learning PC to have a capable local rig at hand. Or rather, I gradually became more and more delusional that I was one powerful GPU away from a Kaggle gold, as many of the intuitive ideas I came up with bore a striking resemblance to those documented by the gold medalists.

The other one is a competition I am currently in: the Riiid AI Education Challenge 2020, whose winners will be invited to present their models at the AAAI-2021 Workshop on AI Education [5]. Although Kaggle’s GPU memory has increased from 13GB last year to 16GB now, I constantly run into out-of-memory (OOM) errors during training, and I may only discover one hours after committing a cloud kernel, by reading the logs.

Moreover, this competition is a so-called “code challenge”. In a normal Kaggle competition, only the target of the test set is unknown, and participants train models to infer it. In a code challenge, your submission is just code, not actual predictions. The code only needs to pass the sanity check on a small public test set; in the end it is run against an undisclosed private test set for which even the data are unknown. This sounds quite fair for everyone, as it is a pure challenge of programming skills, not hardware. The caveat is that if one can train a fast (there is a GPU run time limit for a single submission) and good model locally, one has a tremendous edge over other participants, because the final submission can load a trained model stored on Kaggle’s cloud and perform only the inference there, not the training.

So I decided to build a machine learning rig with a powerful GPU myself.

Budgeting and picking parts

This September, I got excited when Chef Huang announced the new RTX 3000 series. Even though all the cards are targeted at PC gaming, the RTX 3090 is essentially an RTX Titan upgrade but \$1000 cheaper, while the RTX 3080 is an upgraded version of the 2080 Ti yet \$500 cheaper. I guess Nvidia has to price its products competitively to battle the new AMD-based gaming consoles.

Moreover, as AMD has been stomping Intel for quite a while with the Zen CPUs, Intel has had to ramp up the core counts in its newer generations of Core CPUs, whose lineup now includes some of the most affordable 8- and 10-core CPUs for people who rely on MKL (Math Kernel Library) in their day job. The 10th gen Comet Lake desktop CPU is like Intel’s swan song for the 14nm++++++++++++++ process, which has been milked to the last drop. There has never been a better time to buy an Intel CPU for scientific computing, as Intel might raise prices again if the 11th/12th gen Core retakes the crown in gaming/research.

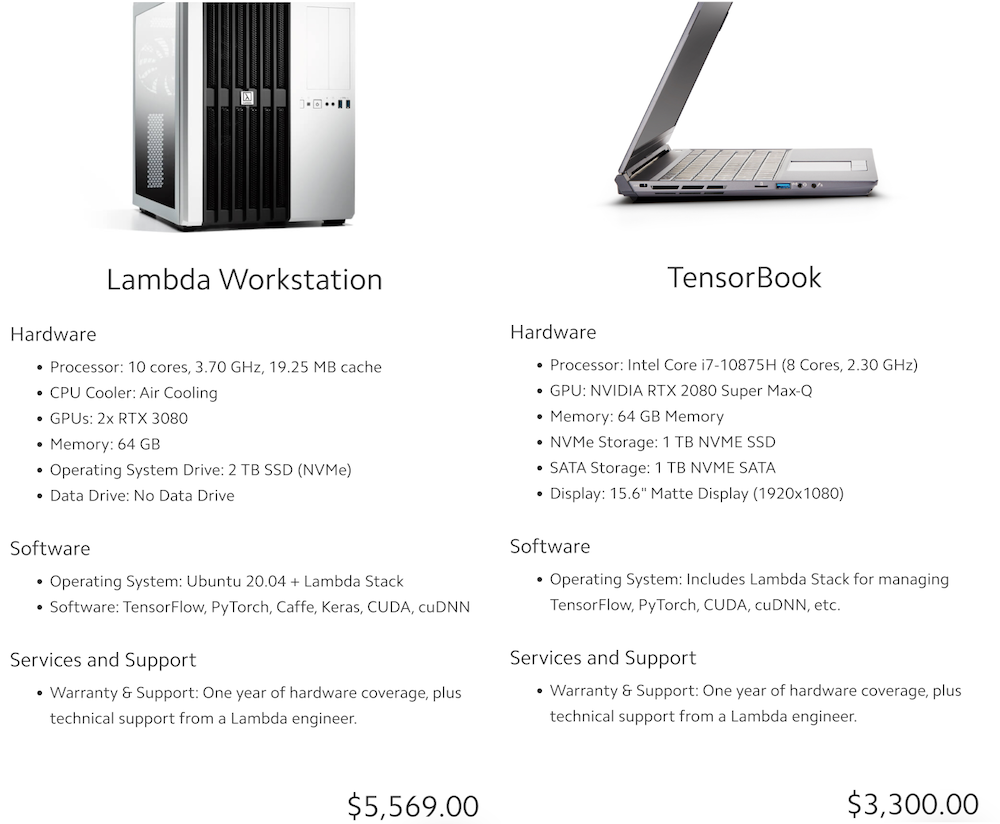

At first, I was looking at the dual-GPU 3080 workstations from Lambda Labs. However, when I consulted our department’s secretary in charge of funding, it turned out that I cannot use National Science Foundation grant money to buy one because it is not budgeted. This means that I have to use my own money. The dual-GPU workstations well exceeded \$5000 even at the lowest possible config, almost doubling my budget. So in the end, I decided on a single-GPU budget build based on the RTX 3090.

After some simple Google searches, I stumbled upon Tim Dettmers’s guide on how to choose a GPU for deep learning [6]. The main prototyping machine I currently have actually uses one of the best price/performance cards (GTX 1060, although I had the max-Q version). I started running into bottlenecks when training graph convolutional neural nets in FP32 with merely 1.5 to 2.5 million parameters back in 2019. I partially followed his guide and picked the parts for a single-GPU build on a website called pcpartpicker, which automatically estimates the total wattage needed and checks the size compatibility of the parts (whether the case has enough room for fans, whether the fan would block the RAM, etc). The core components include:

- Intel 10th gen Core i9-10850K: a 10-core, 20-thread big boy, essentially a 10900K (the best non-X i9 for enthusiasts).

- A big air cooler: originally I wanted the Noctua D15 black version but could not find one, so I ended up getting a Scythe Fuma 2.

- Asus TUF gaming Z490 motherboard with WiFi.

- The cheapest DDR4-3200 RAM (two 32G modules).

- An NVMe M.2 SSD with a data HDD.

- A case with good ventilation and lots of fan mounting options (Fractal Meshify C).

- Whatever RTX 3090 non-blower edition I could get, the lower the price the better.

Overall the cost is about \$2800 if I manage to get a card from Asus TUF, Gigabyte Eagle, or MSI Ventus. It is worth noting that, two years ago, with a 9900X (10 cores) and a Titan RTX (24G VRAM), the cost of a similar rig would easily exceed \$5000, not to mention that the cooling of the Titan RTX is known to be atrocious.

Where to get a 3090?

After budgeting and picking parts, I just realized a serious problem:

How the heck am I gonna get a 3090?????

I checked every single retailer website (Newegg, Amazon, Best Buy, B&H), and not a single 3090 card was in stock. Even those that are \$300 more expensive (vs the 3090 Founders Edition) with hideous RGB LED lighting were totally gone. eBay scalpers were selling the card for \$1000 more than the list price.

After some Google searching, I found a website called CyberpowerPC, which sells gaming PCs and had all the 3080s and 3090s “in-stock”. You can customize a “build” on their website; however, you have no clue which brand’s 3090 you will get. I also had to cut corners here and there to meet my \$2800 budget, since there is a certain pre-built premium.

Figure: CPU downgrades to 10700, RAM downgrades to 32G, and CyberpowerPC charges the customer for making the wiring “professional”…

Only two weeks after I placed my order did I realize (duh…) that CyberpowerPC was selling futures, as they did not actually have a 3090 “in-stock”. There was no indication that assembly of my build had started, which was confirmed by customer service’s answer to my inquiry.

Feeling frustrated, I cancelled my order and set up some Newegg notifications for the GPUs in its official app. Surprise, surprise, I got two in-stock alerts for the 3090s the first day I installed the app! Yet in both cases, I clicked the notification only to find that the drop had already sold out. Upon more Googling on how to buy a limited-stock item, I found that there are many scalpers with web crawling bots helping them add things to the cart. While doing this research on the scalpers, I ironically happened to see on Newegg’s Twitter that “Bot protection was in place, orders were human” …oh well.

Feeling desperate, I came up with the idea of using a Python web crawling tool to detect whether there is an “Add to cart” button (text with an image overlay, to be precise). Upon more Googling, I found a tool called Scrapy. Using a few try...except... blocks together with some samples copied from StackOverflow, I soon had code ready that uses Scrapy’s xpath interface to check certain cards and combos on Newegg for “add to cart”, and sends me an SMS whenever a certain criterion is met. Since I only had spare time at a computer after my boy went to bed, I did not feel the urge to deploy the code on a cloud platform like GCP or AWS to run 24/7; I just ran the code locally and saved the garbage by-products to an external drive. Guess what: the first night this “add to cart” detector was deployed, I was lucky enough to get a Gigabyte Eagle RTX 3090 card from Newegg, and I found that the Newegg official app’s notification was about 2 minutes slower.
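To give a rough idea of what such a detector does, here is a minimal sketch of the button check. It is not my actual script: it swaps Scrapy’s xpath selectors for the standard library’s `html.parser` so that it runs without extra packages, and it assumes the page marks the button up as a plain `<button>` element, which may not match Newegg’s real markup. The names `AddToCartFinder`, `is_in_stock`, and `check_listing` are made up for illustration, and `notify` stands in for whatever SMS service you use.

```python
from html.parser import HTMLParser


class AddToCartFinder(HTMLParser):
    """Collects the visible text of every <button> element on a page."""

    def __init__(self):
        super().__init__()
        self._depth = 0          # > 0 while we are inside a <button>
        self._chunks = []        # text pieces of the button being read
        self.button_texts = []   # labels of the buttons seen so far

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._depth += 1

    def handle_endtag(self, tag):
        if tag == "button" and self._depth > 0:
            self._depth -= 1
            if self._depth == 0:
                # Collapse whitespace so "Add  to\ncart" still matches.
                label = " ".join("".join(self._chunks).split())
                self.button_texts.append(label)
                self._chunks = []

    def handle_data(self, data):
        if self._depth > 0:
            self._chunks.append(data)


def is_in_stock(html):
    """Return True if any button on the page reads 'add to cart'."""
    finder = AddToCartFinder()
    finder.feed(html)
    finder.close()
    return any("add to cart" in text.lower() for text in finder.button_texts)


def check_listing(html, notify):
    """Check one downloaded page; call notify(message) when a button is found.

    notify is a hypothetical callback, e.g. a function that sends an SMS.
    """
    try:
        if is_in_stock(html):
            notify("Add to cart button found")
            return True
    except Exception as err:  # a malformed page should not kill the loop
        print(f"check failed: {err}")
    return False
```

In practice, the page HTML would come from the crawler’s response, and `check_listing` would be called in a loop over the product pages being watched, with a polite delay between requests.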

Finally, the wait is over, and it is time to start building!

Andromeda: Guardian of the Gigabyte

Other parts in this “building a budget Deep Learning workstation” series

- Part One: Building: assembling the parts and how to deal with the bending of the big and heavy RTX 3090.

- Part Two: software and CUDA: we will learn how to install CUDA and PyTorch, and how to configure Ubuntu so that the GPU is NOT used for graphics display but only for CUDA computing.

- Part Three: undervolt the GPU: we will see how to configure Ubuntu to achieve an undervolt effect on the GPU, making the system more stable. This serves our need to train models for longer periods of time.

- Bonus part: productivity tweaks on Ubuntu for MacOS users: as a long-time MacOS user, I will show how to configure an almost MacOS-like keyboard on Linux.

[2] UC Irvine Math 10 Spring 2019: Is your algorithm fashionable enough to classify sneakers? https://www.kaggle.com/c/uci-math10-spring2019

[3] UC Irvine Math 10 Winter 2020: Can your algorithm read ancient Japanese literature? https://www.kaggle.com/c/uci-math-10-winter2020

[4] Stanford University OpenVaccine: COVID-19 mRNA Vaccine Degradation Prediction. https://www.kaggle.com/c/stanford-covid-vaccine

[5] Riiid! Answer Correctness Prediction: Track knowledge states of 1M+ students in the wild. https://www.kaggle.com/c/riiid-test-answer-prediction

[6] Which GPU(s) to Get for Deep Learning: My Experience and Advice for Using GPUs in Deep Learning. https://timdettmers.com/2020/09/07/which-gpu-for-deep-learning
