Build a budget 3090 deep learning workstation, Part Three: undervolt an RTX GPU on Ubuntu

Other parts in the “Build a budget ML workstation” series:

- Part Zero: Random tidbits: a newbie’s story of building a budget machine learning workstation.

- Part One: Building: assembling the parts and dealing with the sag of the big and heavy RTX 3090.

- Part Two: Software and CUDA: we will learn how to install CUDA and PyTorch, and how to configure Ubuntu so that the GPU is NOT used for driving the display but only for CUDA computing.

- Bonus part: Productivity for Mac users: as a longtime macOS user, we will learn how to configure an almost macOS-like keyboard layout on Linux.

How to undervolt the GPU on Ubuntu

On Windows, the well-known MSI Afterburner can dial down the GPU voltage. This significantly reduces the overall heat, yet the performance takes only a minor hit, because the GPU can run at a higher clock for a given voltage.

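On Ubuntu there is no native Afterburner, but we can configure things to achieve something similar. First, edit or create /etc/rc.local and add the following to the file. The file must be executable (for example, sudo chmod +x /etc/rc.local) for systemd to run it at boot: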

#!/bin/bash

sudo nvidia-smi -i 0 -pl 280

exit 0

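Note that the #!/bin/bash line is necessary. The -i 0 option selects the first GPU, and -pl 280 sets its power limit to 280 W (the stock limit is 350 W). Since rc.local already runs as root at boot, the sudo is redundant but harmless.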
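After a reboot, it is worth confirming that rc.local actually ran. Here is a minimal sketch, assuming GPU index 0, that reads the enforced limit through nvidia-smi's power.limit query field. The helper name check_power_limit is hypothetical. The NVSMI override is also an assumption, added only so the check can be exercised without a GPU.

```bash
#!/bin/bash
# Hypothetical check: does GPU 0's power limit match the value rc.local set?
# NVSMI may name another command (for testing only); it defaults to nvidia-smi.
check_power_limit() {
  local target="$1"
  local nvsmi="${NVSMI:-nvidia-smi}"
  local current
  # csv,noheader,nounits prints just the number, e.g. "280.00"
  current=$("$nvsmi" -i 0 --query-gpu=power.limit --format=csv,noheader,nounits) || return 2
  # Compare only the integer part of the reported value
  if [ "${current%%.*}" -eq "$target" ]; then
    echo "ok: power limit is ${current} W"
  else
    echo "mismatch: power limit is ${current} W, expected ${target} W"
    return 1
  fi
}
```

Once the rc.local change has taken effect, running check_power_limit 280 should print an ok line.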

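The next step is to set a graphics clock offset of +105 MHz, so that under load the GPU core runs 105 MHz higher than stock at the same voltage. Combined with the lower power limit, this lets the card hold similar clocks at a lower voltage, which is what undervolting aims for. Unlike the power limit, this setting goes through nvidia-settings, which talks to the running X server. It is therefore added as a startup application in the desktop session rather than to rc.local. Clock offsets are only exposed when the Coolbits option is enabled in the X configuration. Adding the following command as a startup application will do the magic: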

nvidia-settings -a '[gpu:0]/GPUGraphicsClockOffset[4]=105' -a '[gpu:0]/GPUGraphicsClockOffset[3]=105' -a '[gpu:0]/GPUGraphicsClockOffset[2]=105'
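The bracketed number in GPUGraphicsClockOffset[N] selects a performance level, so the command above applies the same +105 MHz offset to levels 2, 3 and 4. If you prefer to keep this in a small script for Startup Applications, here is a minimal sketch. The function names are hypothetical, and the levels are copied from the command above, so they may need adjusting for other cards or drivers.

```bash
#!/bin/bash
# Hypothetical startup script: apply one graphics clock offset (MHz)
# to several performance levels of GPU 0 via nvidia-settings.

# Print an "-a" / "<assignment>" pair per performance level, one token per line.
build_offset_args() {
  local offset="$1"
  shift
  local level
  for level in "$@"; do
    printf '%s\n' "-a" "[gpu:0]/GPUGraphicsClockOffset[${level}]=${offset}"
  done
}

# Apply the offset to levels 2-4; needs a running X session with Coolbits enabled.
apply_offsets() {
  local offset="${1:-105}"
  local -a args
  mapfile -t args < <(build_offset_args "$offset" 2 3 4)
  nvidia-settings "${args[@]}"
}
```

Adding a final line such as apply_offsets 105 turns this into a script you can register under Startup Applications. It issues the same nvidia-settings assignments as the one-liner above.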

The results are promising. They were measured with the TensorFlow CNN benchmark (tf_cnn_benchmarks), using ResNet-50 and a batch size of 64:

python3 tf_cnn_benchmarks.py --model resnet50 --batch_size 64

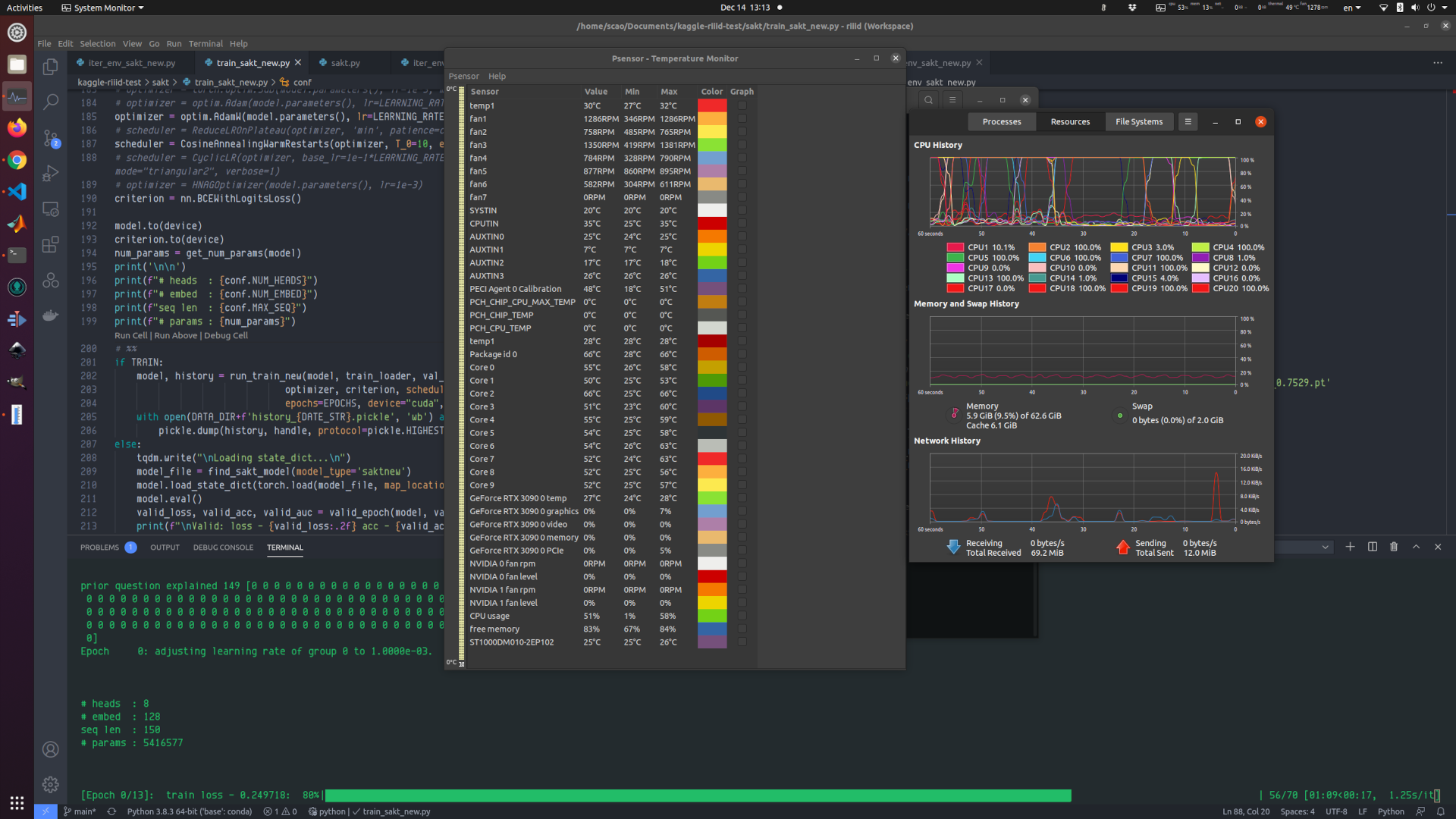

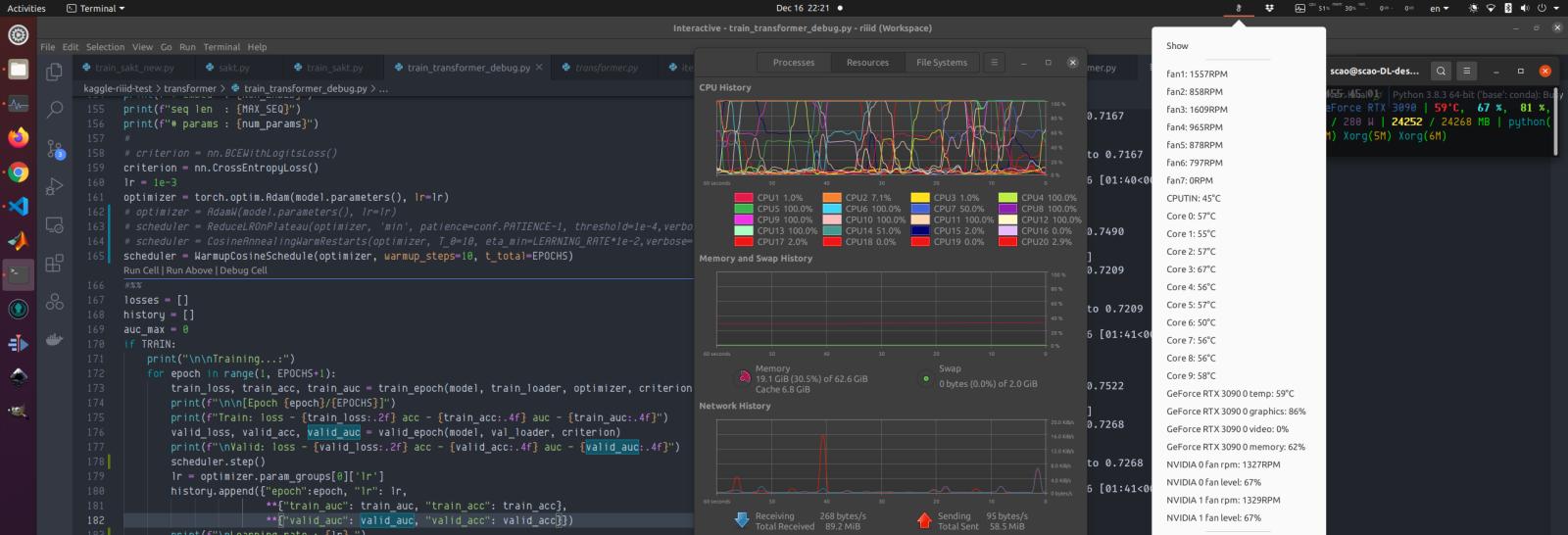

With the 280 W limit and the +105 MHz offset, the GPU processes 447 images/sec, compared with 471 images/sec at the stock 350 W setting. The performance hit is about 5% (447/471 ≈ 0.95), while the peak power is down 20% (280 W vs. 350 W). The GPU can now sustain full load for an extended period of time (one or two days non-stop) at a relatively low temperature of 55–62 °C. The sacrifice is totally worth it.
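To double-check these percentages and put both settings on a common efficiency scale, here is a small sketch that uses awk for the floating-point math. The function names are hypothetical. The watt figures are the configured power limits, not measured draw, so the images/sec-per-watt values are only a rough comparison.

```bash
#!/bin/bash
# Compare two power-limit settings using the benchmark numbers quoted above.
# Note: watts are configured power limits, not measured power draw.

# pct_change OLD NEW -> signed percent change, one decimal place
pct_change() {
  awk -v old="$1" -v new="$2" 'BEGIN { printf "%.1f\n", (new - old) / old * 100 }'
}

# per_watt IMAGES_PER_SEC WATTS -> images/sec per watt, two decimal places
per_watt() {
  awk -v ips="$1" -v w="$2" 'BEGIN { printf "%.2f\n", ips / w }'
}
```

For example, pct_change 471 447 prints -5.1 and pct_change 350 280 prints -20.0. On this rough scale, per_watt 447 280 gives 1.60 images/sec per watt, versus 1.35 for per_watt 471 350.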
